TTA has an open invitation to industry leaders to contribute to our Perspectives non-promotional opinion area. Today’s Perspectives is from Deepak Singh, a thought leader in AI and telehealth. In his work, he builds AI-powered healthtech and telehealth solutions that can reach from big cities to remote areas of the world. With double master’s degrees in business and information systems, he has 10 years of experience in product development, management, and design ranging from telecom to multimedia and from IT solutions to enterprise healthcare platforms. This article discusses how artificial intelligence (AI) and machine learning (ML) can accelerate the global growth of telemedicine, including a consideration of risks and possible solutions.

Introduction

Ongoing technological advances have opened greater opportunities for growth in the global health business, particularly in telemedicine, where increased internet connectivity, robotics, data analytics, and cloud technology will further drive innovation over the next ten years. Artificial intelligence (AI) plays a notable part in the operation and execution of medical technologies, given the large volume of data that healthcare must handle, the need for consistent accuracy in complex procedures, and the rising demand for healthcare services.

Telemedicine is the practice of carrying out consultations, medical tests and procedures, and remote collaboration among medical professionals through interactive digital communication. Telemedicine is an open science that is constantly growing as it embraces new technological developments and adapts to shifting social circumstances and health demands. The primary goals of telemedicine are to close the accessibility and communication gaps in four fields: teleconsultation, which covers all kinds of physical and mental health consultations without an in-person visit to a medical facility; teleradiology, which uses information and communication technologies (ICT) to transmit digital radiological images (such as X-ray images) from one place to another; telepathology, which uses ICT to transmit digitized pathological results; and teledermatology, which uses ICT to transmit medical information about skin conditions.

AI has been progressively applied in the field of telemedicine. AI includes machine learning (ML), which uncovers complex relationships that are hard to express in an equation. Much as the human brain and its neural networks encode data using an enormous number of interconnected neurons, ML systems can approach difficult problems the way a doctor might, by carefully analyzing the available data and drawing valid judgments.

A growing understanding of artificial intelligence and data analytics can help to broaden telemedicine's reach and capabilities. Telemedicine's goal is to boost productivity and to organize experience, information, and manpower according to need and urgency, and the use of AI and ML can augment it.

Evolving application of AI and ML in Telemedicine

AI is increasingly employed in telemedicine to enable clinicians to make more data-driven, immediate decisions that could enhance the patient experience and health outcomes. The use of AI in healthcare is a promising approach for future telemedicine applications.

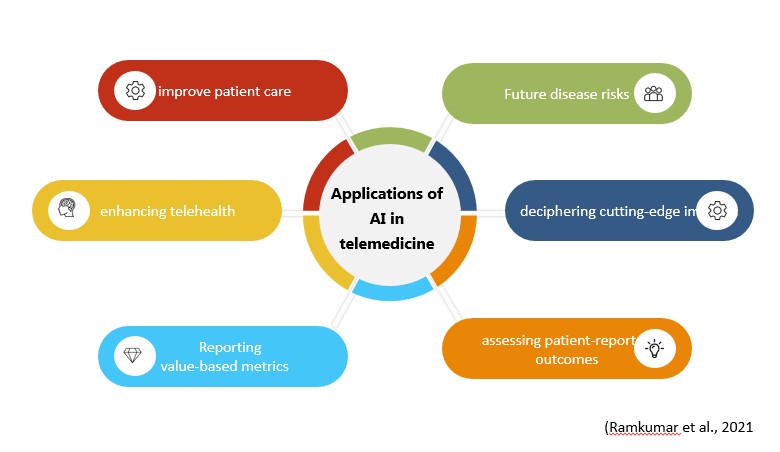

AI and ML have brought about change in many sectors because of their competence, increased productivity, and consistent execution of tasks. AI is moving beyond theory into a practical domain where the need for human supervision of machine-executed jobs will be minimized, thanks to the availability of enormous datasets and growth in the power to process that data. An AI-based algorithm can analyze input data such as 'training sets' using pattern recognition and then predict and categorize outputs, at a scale beyond what human analysis with traditional statistical approaches can manage. In telemedicine, the adoption of AI and ML still has a long way to go before its core concepts are understood and applied accordingly. Nevertheless, the current picture is promising: many research projects have applied AI to predicting the risk of future disease incidence, interpreting advanced imaging, evaluating patient-reported results, recording value-based metrics, and improving telehealth. The potential to automate tasks and improve data-driven insight may be realized in substantially better patient care, provided AI is applied with responsibility, attentiveness, and proficiency.

Drawbacks of artificial intelligence in telemedicine (more…)
